QAI-h1290FX

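A GPU-ready edge AI storage server with support for NVIDIA® GPUs, U.2 NVMe SSDs, and 25GbE connectivity, designed for on-premises AI, virtualization, and compute-intensive workloads.

The QAI-h1290FX is a desktop edge server that converges compute and storage, combining a high-performance computing architecture with high-speed storage. With support for configurable NVIDIA® RTX™ PRO Blackwell GPUs, it is well suited to on-premises AI, LLM inference, private RAG search, virtualization, and other demanding compute tasks.

Powered by QuTS hero and the ZFS file system, the platform delivers enterprise-grade data integrity and stable performance. Whether used for AI deployment, R&D, high-performance computing, or enterprise virtualization, the QAI-h1290FX offers flexible configuration and rapid deployment so that mission-critical tasks run securely and efficiently at the edge.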

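GPU-ready architecture with RTX PRO Blackwell support

A GPU-ready design supports NVIDIA® RTX™ PRO Blackwell GPUs, including options such as the RTX PRO 6000 Blackwell Max-Q Workstation Edition, for AI tasks, image generation, inference, and GPU-accelerated computing.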

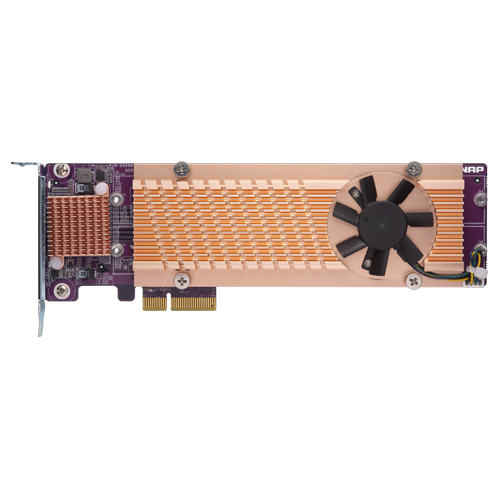

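High-speed all-flash NVMe storage architecture

Twelve U.2 NVMe SSD bays, with SATA SSD support, allow storage to be configured flexibly for performance, capacity, or cost. Suitable for AI tasks, virtualization, and real-time data processing.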

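On-premises LLM and RAG search

Supports private LLMs and RAG-based on-premises deployment for secure semantic document retrieval, with no need to upload sensitive data to the cloud.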

ZFS-based QuTS hero operating system

Powered by QuTS hero and ZFS, with inline compression, self-healing, snapshots, and SnapSync to safeguard enterprise-grade data integrity.

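GPU acceleration and AI application templates

GPU acceleration is provided through Container Station. One-click deployment of Ollama, AnythingLLM, Stable Diffusion, and more simplifies bringing AI applications online.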

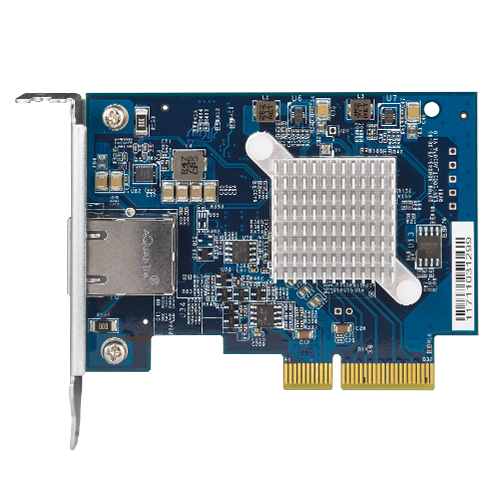

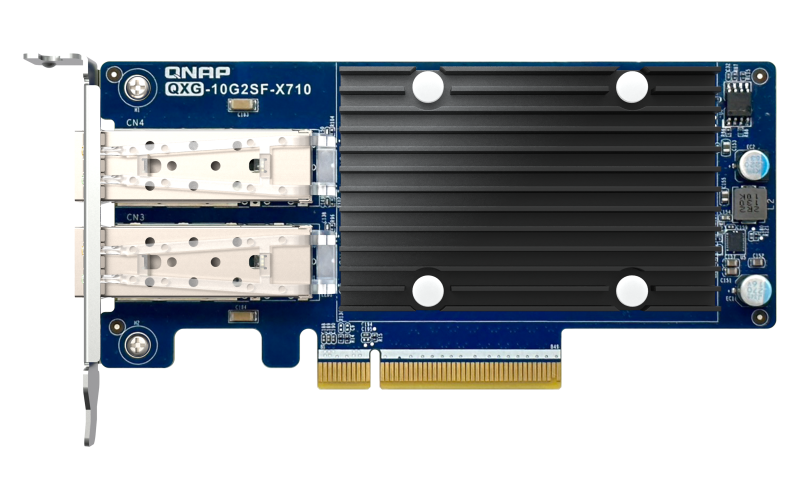

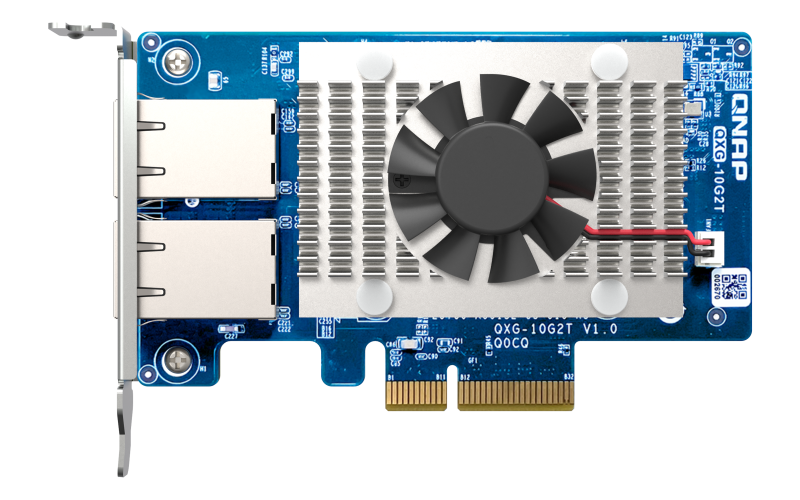

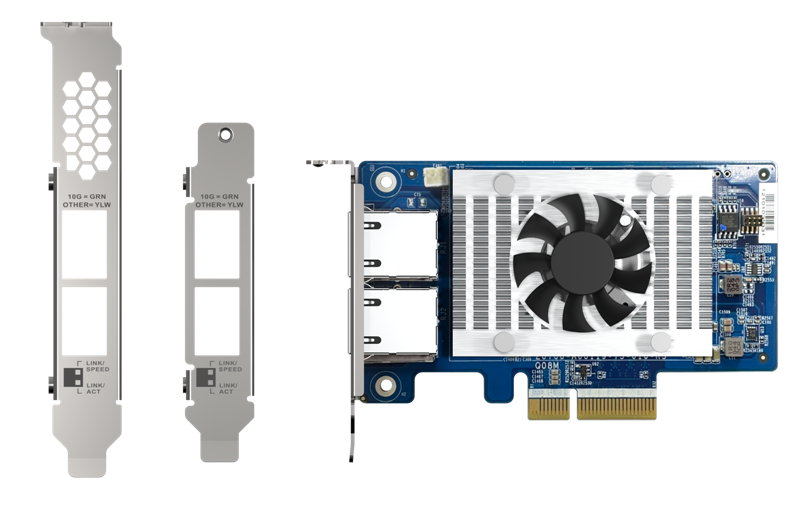

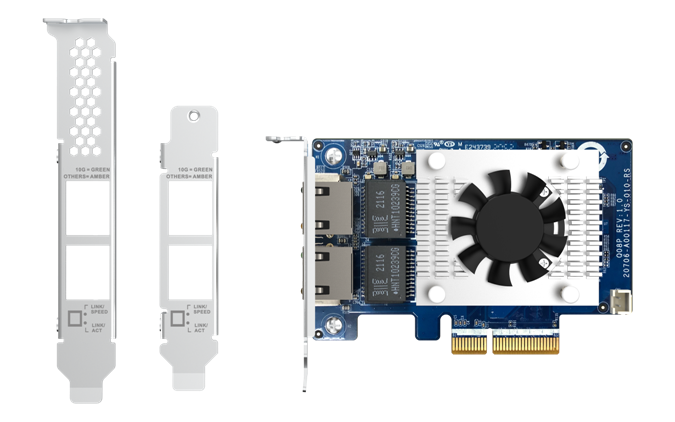

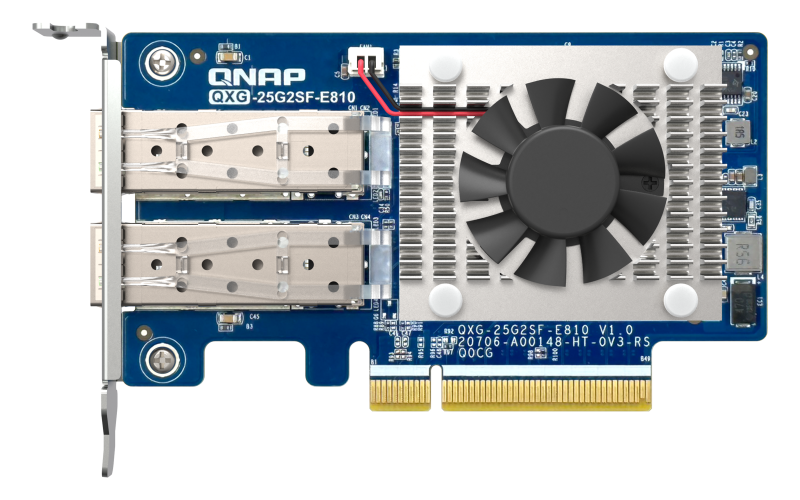

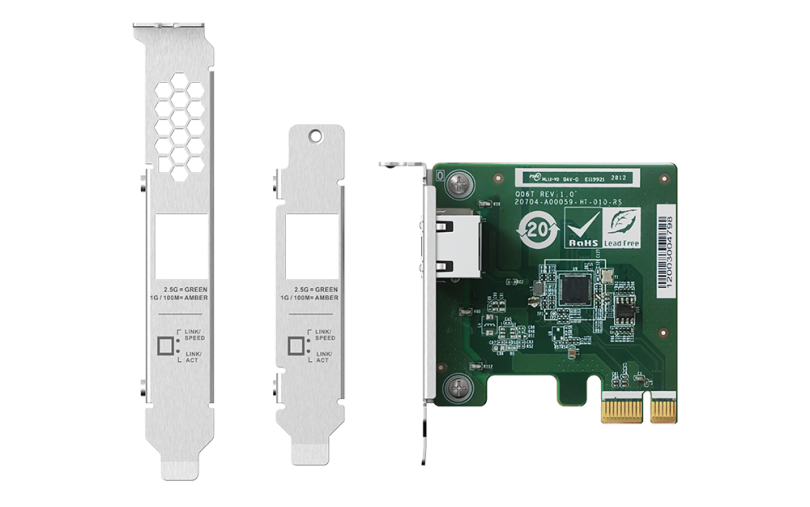

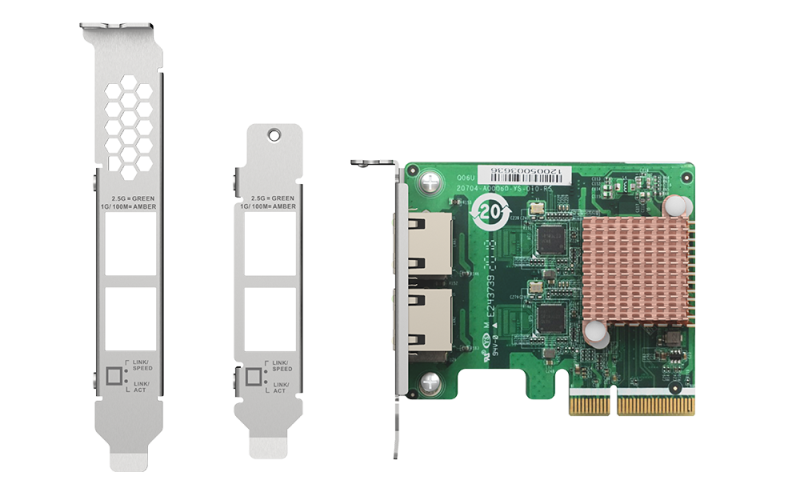

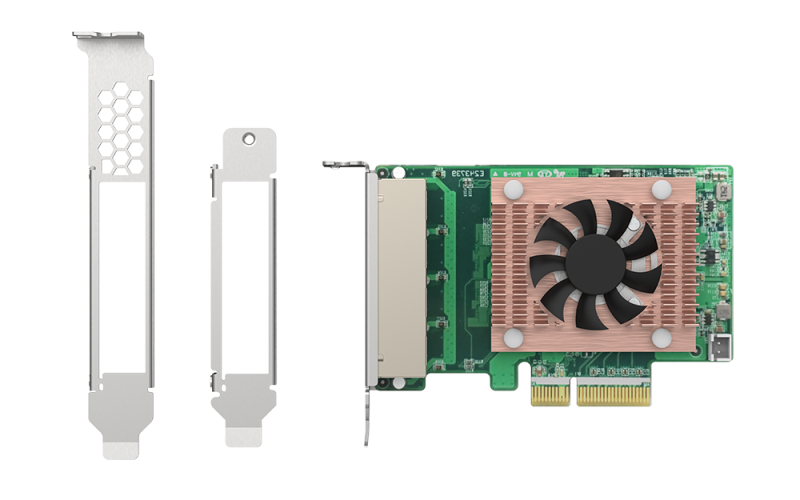

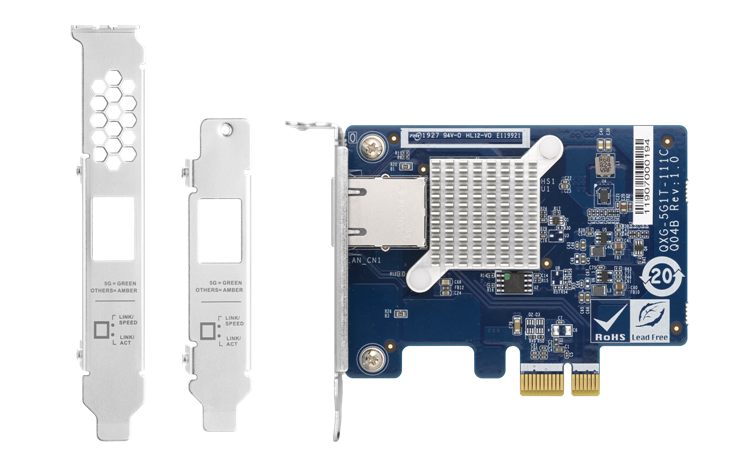

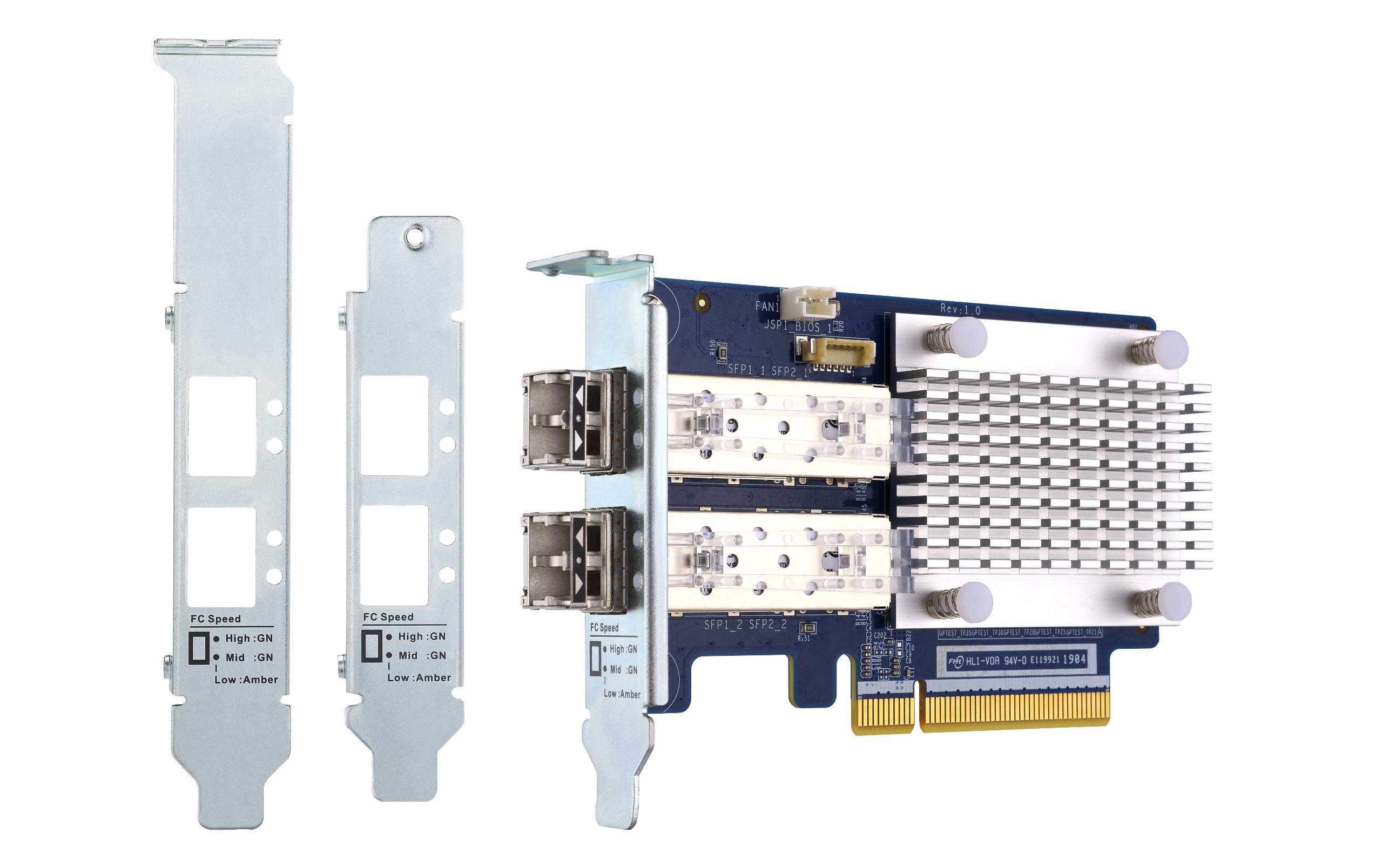

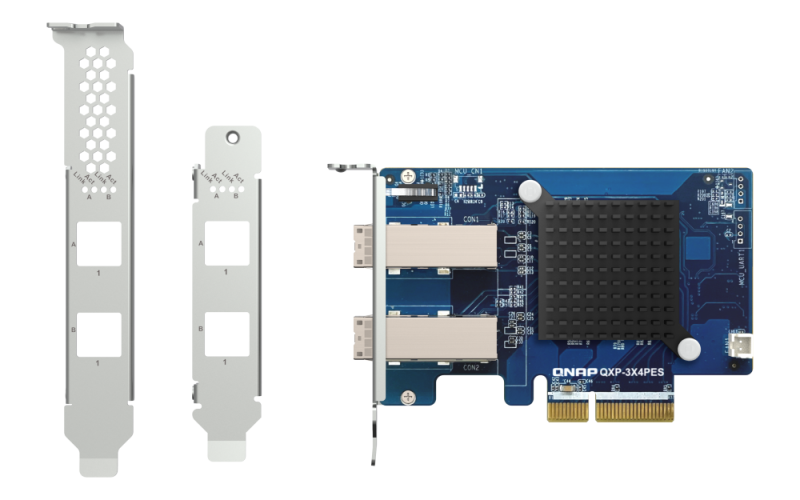

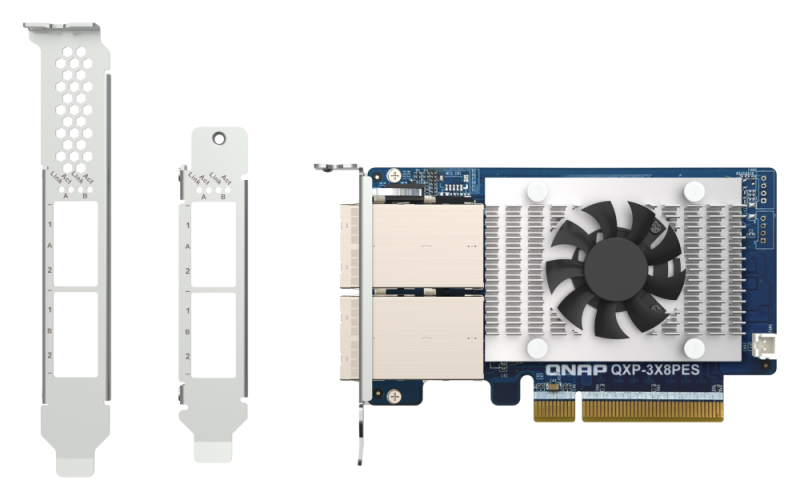

25GbE connectivity and expansion support

Built-in dual 25GbE and 2.5GbE ports, upgradable to 100GbE. Expand with QNAP JBODs to meet growing AI data storage needs.

QNAP QAI-h1290FX

Winner, TechRadar Pro Picks Awards, CES 2026

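QAI use cases

Enterprise edge AI and high-performance computing

The QAI-h1290FX is more than a storage system: it is an enterprise edge computing platform with real compute capability. Built on a high-performance computing architecture with support for configurable NVIDIA® RTX™ PRO Blackwell GPUs, it suits large language model (LLM) inference, image generation, RAG search, and a range of compute-intensive and virtualization workloads.

Whether the workload is AI inference, R&D, data analytics, or an enterprise application that needs high core counts and sustained performance, a single desktop enterprise platform delivers strong compute efficiency and data security, all on premises.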

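Efficient AI compute performance (optional GPU configuration)

GPU-ready architecture: support for NVIDIA® RTX™ PRO Blackwell

The QAI-h1290FX uses a GPU-ready architecture designed to support NVIDIA® RTX™ PRO Blackwell GPUs. Based on the Blackwell architecture, these GPUs support acceleration technologies such as CUDA, TensorRT, and Transformer Engine for modern AI and GPU-accelerated computing workloads.

From large language model (LLM) inference and computer vision to generative AI and other GPU-accelerated professional applications, workloads can be deployed and run on premises with strong performance while preserving data privacy and full system control. The platform can also serve as a CPU-centric high-performance computing system for virtualization and a variety of enterprise computing scenarios.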

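NVIDIA® RTX™ PRO Blackwell series: redefining AI and high-performance computing workflows

NVIDIA® RTX™ PRO Blackwell series GPUs are built for intensive AI, compute, and creative workloads, combining the new-generation Blackwell architecture with ultra-fast GDDR7 ECC memory. A single professional GPU provides compute performance and memory capacity that previously required several consumer graphics cards.

With up to 96GB of GPU memory and enhanced AI acceleration, the RTX™ PRO Blackwell series suits advanced LLMs, generative models, data analytics, and complex 3D visualization and professional computing workflows.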

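Powered by server-grade AMD EPYC™ processors for high-performance computing

The QAI-h1290FX is built on a server-grade AMD EPYC processor platform that provides high core counts and strong multithreaded performance.

It is designed for long-term stable operation under highly parallel workloads and suits virtualization, multithreaded computing, data processing, and edge computing, while also supporting AI inference and a variety of compute-intensive applications.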

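Runs the QuTS hero operating system

Built for the enterprise, the QuTS hero operating system uses the highly reliable ZFS file system to provide strong data protection and system stability for critical data storage. It also includes technologies focused on improving SSD performance and endurance, meeting demanding enterprise requirements for performance and reliability.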

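Remote access to on-premises AI, anytime and anywhere

With the range of remote access options QNAP provides, you can build a smooth hybrid work environment. Whether you are managing AI applications or accessing files, the QAI-h1290FX keeps you connected without compromising security.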

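Direct or relayed access options

- myQNAPcloud DDNS: Access your QuTS hero interface anytime and anywhere through a custom domain name, with no need to remember IP addresses.
- myQNAPcloud Link: Establish a secure relayed connection through QNAP servers, with no need to open router ports or change firewall settings.
- VPN server support: Use QVPN Service to set up a dedicated VPN that provides a secure encrypted tunnel with full access to your network.

Whether you are tuning LLM container settings, reviewing inference logs, or collaborating across regions, the QAI-h1290FX provides reliable access to your on-premises AI environment from any device, at any time.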

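A more powerful Container Station: a new experience for AI application deployment

To bring AI into practical use, the QAI series integrates Container Station with a rich set of AI application templates. These templates support one-click deployment of popular AI tools and frameworks, and QNAP updates them regularly so that the latest technology stays available.

Whether you are new to AI or want to move workloads on premises, QAI helps you explore AI with ease, reduce costs, improve data security, and even develop custom AI tools that support business innovation.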

Simplified containerized AI deployment

Strengthen your AI infrastructure with seamless container integration.

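Redefine creativity with AI-driven visual design

ComfyUI gives artists, designers, and content creators a powerful modular interface for AI-driven image and video creation. With an intuitive node-based design and support for advanced models such as Stable Diffusion, users can generate, transform, and animate visual content with ease. Combined with GPU acceleration and flexible workflows, ComfyUI lowers the barrier to complex visual design and opens up greater creative freedom.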

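Real-world AI performance, measured on the QAI-h1290FX

AI deployment performance is validated with real-world benchmark data. In a high-end GPU test configuration, the QAI-h1290FX was evaluated with the NVIDIA® RTX™ PRO 6000 Blackwell Max-Q Workstation Edition GPU to verify its behavior in on-premises AI inference and enterprise deployment scenarios.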

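Ollama LLM inference benchmark (rapid deployment)

With the GPU acceleration of the Blackwell architecture, the QAI-h1290FX can run a variety of large language models on premises through Ollama.

Ollama offers rapid deployment and simplified management, and suits proof-of-concept (PoC) projects, single-user environments, and small to medium-scale use cases such as RAG-based search, AI assistants, and offline inference.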
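As an illustration of how a single-request measurement of this kind might be taken, the minimal sketch below builds a request for Ollama's `/api/generate` endpoint and derives tokens per second from the `eval_count` and `eval_duration` fields that Ollama reports in a non-streaming response. The URL assumes Ollama is listening on its default port on the same host, and the model name in the usage note is a placeholder. This is not the procedure QNAP used for its benchmarks.

```python
import json
import urllib.request

# Assumption: Ollama is reachable on its default port on this host.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model, prompt):
    """Build a non-streaming generate request for the given model and prompt."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def tokens_per_second(result):
    """Generated tokens divided by generation time (eval_duration is in nanoseconds)."""
    return result["eval_count"] / (result["eval_duration"] / 1e9)


def generate(model, prompt):
    """Send the request and return the decoded JSON response."""
    with urllib.request.urlopen(build_request(model, prompt)) as resp:
        return json.loads(resp.read())
```

With a model already pulled, something like `tokens_per_second(generate("your-model", "Summarize ZFS snapshots."))` would report the generation rate for one request. Results depend on the model, quantization, and prompt length.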

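vLLM concurrent inference benchmark (enterprise-grade throughput)

For multi-user and high-concurrency AI services, the QAI-h1290FX also supports deployment with the vLLM inference engine.

Compared with single-request inference, vLLM raises GPU utilization and overall throughput through PagedAttention and efficient scheduling, which makes it well suited to enterprise AI services, multi-user RAG systems, and API-based AI applications.

On the same GPU configuration, vLLM shows more stable latency and higher tokens-per-second throughput under concurrent requests, making it suitable for production environments and long-term enterprise AI deployments.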
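To make "throughput under concurrent requests" concrete, the sketch below sends a batch of prompts in parallel and reports aggregate completion tokens per second. It assumes a vLLM OpenAI-compatible server on `localhost:8000` and reads `usage.completion_tokens` from each response. The function names and the concurrency level are illustrative choices, not part of QNAP's test setup.

```python
import json
import time
import urllib.request
from concurrent.futures import ThreadPoolExecutor

# Assumption: a vLLM OpenAI-compatible server is running on this host and port.
VLLM_URL = "http://localhost:8000/v1/completions"


def send_completion(model, prompt, max_tokens=128):
    """Send one completion request and return the number of generated tokens."""
    payload = {"model": model, "prompt": prompt, "max_tokens": max_tokens}
    req = urllib.request.Request(
        VLLM_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        body = json.loads(resp.read())
    return body["usage"]["completion_tokens"]


def measure_throughput(prompts, send, concurrency=8):
    """Run send(prompt) for every prompt in parallel and aggregate the token counts."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        token_counts = list(pool.map(send, prompts))
    elapsed = time.perf_counter() - start
    total = sum(token_counts)
    return {
        "requests": len(token_counts),
        "completion_tokens": total,
        "elapsed_s": elapsed,
        "tokens_per_s": total / elapsed if elapsed > 0 else 0.0,
    }
```

A run against one of the tested models could look like `measure_throughput(prompts, lambda p: send_completion("deepseek-ai/DeepSeek-R1-Distill-Qwen-7B", p))`. Passing `send` as a parameter keeps the timing logic independent of the server, so it can be exercised with a stub before pointing it at real hardware.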

LLM tested: deepseek-ai/DeepSeek-R1-Distill-Qwen-7B (Hugging Face)

LLM tested: openai/gpt-oss-20b (Hugging Face)

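Unlock AI's potential through real-world use cases

From document automation to creative workflows and system-level automation, the QAI-h1290FX helps every department apply AI in meaningful, measurable ways, securely hosted on your own on-premises infrastructure.

No cloud lock-in and no complex configuration: on-premises LLMs, secure containers, and integrated QNAP features deliver real results.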

AI Docker applications on the QAI-h1290FX

Run efficient AI solutions through Container Station with GPU integration.